Lately I’ve been spending time on Mastodon for … reasons. Here’s my link: https://oldbytes.space/@blakespot. In my recent time on the platform, I have found quite a few excellent retrocomputing-related posts by creative members of the community. One such post, by Dougall, links to his blog post entitled “Why is Rosetta 2 fast?”.

Rosetta 2 is the ahead-of-time translator that’s part of macOS Big Sur (and later). When an x64 Intel binary is launched, Rosetta 2 translates it to 64-bit ARM code before it runs on an ARM-based Apple Silicon processor. It is not a real-time emulator. It translates the entire binary, once, at launch time, making best-guess choices along the way. Dougall delves into various aspects of Rosetta 2 in an effort to explain why it is so performant; in many instances the translated binary runs faster on Apple Silicon than the original does on the fastest Intel machines that Apple has ever released. It’s impressive.

It’s a fascinating read for a tech nerd like me who has a particular interest in OS technology. But one detail of the post really grabbed my attention.

Apple’s secret extension

There are only a handful of different instructions that account for 90% of all operations executed, and near the top of that list are addition and subtraction. On ARM these can optionally set the four-bit NZCV register, whereas on x86 these always set six flag bits: CF, ZF, SF and OF (which correspond well-enough to NZCV), as well as PF (the parity flag) and AF (the adjust flag).

Emulating the last two in software is possible (and seems to be supported by Rosetta 2 for Linux), but can be rather expensive. Most software won’t notice if you get these wrong, but some software will. The Apple M1 has an undocumented extension that, when enabled, ensures instructions like ADDS, SUBS and CMP compute PF and AF and store them as bits 26 and 27 of NZCV respectively, providing accurate emulation with no performance penalty.
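To picture the layout being described, here is a minimal Python sketch that pulls the individual flags out of a raw NZCV value. The positions of N, Z, C and V (bits 31 down to 28) are the standard ARM ones; the positions of PF and AF are taken from the passage above, and the helper names are my own, purely for illustration.

```python
# Bit positions within the NZCV register value.
# N, Z, C, V are architectural; PF (26) and AF (27) follow the description
# quoted above and are an assumption of this sketch.
FLAG_BITS = {"N": 31, "Z": 30, "C": 29, "V": 28, "AF": 27, "PF": 26}

# Rough x86 counterparts of the ARM flag names. Note that after a
# subtraction ARM's C means "no borrow" while x86's CF means "borrow",
# so a translator has to account for that inversion; this sketch does not.
ARM_TO_X86 = {"N": "SF", "Z": "ZF", "C": "CF", "V": "OF", "AF": "AF", "PF": "PF"}


def decode_nzcv(value: int) -> dict:
    """Return each flag as 0 or 1 from a raw NZCV register value."""
    return {name: (value >> bit) & 1 for name, bit in FLAG_BITS.items()}


def to_x86_names(flags: dict) -> dict:
    """Relabel decoded ARM flags with their approximate x86 names."""
    return {ARM_TO_X86[name]: bit for name, bit in flags.items()}


if __name__ == "__main__":
    raw = (1 << 30) | (1 << 29) | (1 << 26)  # Z, C and PF set
    print(to_x86_names(decode_nzcv(raw)))
```

The point of the sketch is only that PF and AF sit in otherwise unused bits right below the four standard flags, so a single flag-setting instruction can deliver all six.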

Intrigued by mention of this “secret extension,” I reached out to the author and asked if he could expand on what Apple has done here. And, he obliged. As he explained in his multi-part Mastodon response:

So, both the “adjust flag” (AF) and the “parity flag” (PF) come from the 8080-family CPUs from the 1970s. Today they’re almost completely unused. The parity flag is set if, in the low eight bits of the result, the number of set bits is even. Otherwise, it’s cleared. The adjust flag is set if there’s a “carry out” from the low four bits of the addition (and otherwise cleared). This was used for binary-coded decimal – that flag would indicate a carry from one 4-bit digit to the next.

Both these flags are computed on every ADD or SUB instruction (extremely often), and 64-bit ARM has no such functionality. For example, to compute the parity flag on ARM, a subtraction turns into something like:

subs w4, w4, w5

dup v24.16b, w4

cnt v23.16b, v24.16b

umov w22, v23.b[0]

and w22, w22, #1

That’s a lot of work for one subtraction (5x as many instructions), and that’s not all of it – we didn’t compute AF.

But it’s not really a lot of work for Intel – in every x86 CPU, they just build some logic that computes both AF and PF at the same time as doing the subtraction. So, since Apple design their own CPUs, they decided to also build this logic. When running Rosetta 2, the CPU is configured to enable this functionality (since it’d break the ARM specification to have it enabled all the time). With that set, Rosetta 2 does:

subs w4, w4, w5

And both flags are computed for it by the hardware.
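As an illustration of what that extra logic has to produce alongside the result, here is a minimal Python sketch of the two flags computed in software for 32-bit operands. It is not Rosetta 2's code; the function names are hypothetical. One detail worth noting: x86 sets PF when the low byte has an even number of set bits, so the low bit of a population count (what an `and ..., #1` after `cnt` yields) is the complement of PF.

```python
MASK32 = 0xFFFFFFFF


def parity_flag(result: int) -> int:
    """x86 PF: 1 if the low byte of the result has an even number of set bits."""
    low_byte = result & 0xFF
    odd = bin(low_byte).count("1") & 1  # low bit of the population count
    return odd ^ 1


def adjust_flag(a: int, b: int, result: int) -> int:
    """x86 AF: carry (or borrow) between bit 3 and bit 4.

    Bit 4 of the result is a4 ^ b4 ^ carry_in, so XOR-ing the operands
    with the result leaves exactly the carry into bit 4.
    """
    return ((a ^ b ^ result) >> 4) & 1


def sub32(a: int, b: int):
    """Return (result, PF, AF) for a 32-bit subtraction a - b."""
    result = (a - b) & MASK32
    return result, parity_flag(result), adjust_flag(a, b, result)


def add32(a: int, b: int):
    """Return (result, PF, AF) for a 32-bit addition a + b."""
    result = (a + b) & MASK32
    return result, parity_flag(result), adjust_flag(a, b, result)


if __name__ == "__main__":
    # 0x10 - 0x01 = 0x0F: four set bits (even), with a borrow out of the low nibble.
    print(sub32(0x10, 0x01))
```

In hardware this amounts to a few extra gates hanging off the adder, which is why it costs x86 chips (and, with the extension enabled, Apple's) essentially nothing.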

Rosetta 2 can also run in Linux VMs on Apple Silicon. In a VM, it isn’t able to configure the host CPU, so it can’t use this functionality. There are two other options. Either you can skip computing the flags, because they’re mostly useless and most software won’t care. Or you can compute them the long way shown above. Rosetta 2 chooses the second option, and this mostly works out fine, because they have an “unused flags” optimisation that avoids the computation a lot of the time.
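I don’t know how Rosetta 2 implements its “unused flags” optimisation, but the general idea can be sketched as a backward pass over a block of instructions: a flag only needs to be computed if something reads it before the next instruction overwrites it. The toy instruction model and names below are entirely my own assumptions, not anything taken from Rosetta 2.

```python
from typing import NamedTuple

ALL_FLAGS = frozenset({"CF", "ZF", "SF", "OF", "PF", "AF"})
NO_FLAGS = frozenset()


class Insn(NamedTuple):
    """Toy x86 instruction: its text plus the flags it writes and reads."""
    text: str
    writes: frozenset
    reads: frozenset


def needed_flags(block, live_out=ALL_FLAGS):
    """For each instruction, return the flags it must actually compute.

    Walks the block backwards, tracking which flags are still "live"
    (may be read later). live_out is the set assumed live after the
    block; defaulting to all flags is the conservative choice.
    """
    live = set(live_out)
    needed = [NO_FLAGS] * len(block)
    for i in range(len(block) - 1, -1, -1):
        insn = block[i]
        needed[i] = frozenset(insn.writes & live)  # written and later read
        live -= insn.writes                        # overwritten here
        live |= insn.reads                         # read here, so live before
    return needed


if __name__ == "__main__":
    block = [
        Insn("sub eax, ebx", ALL_FLAGS, NO_FLAGS),
        Insn("cmp ecx, edx", ALL_FLAGS, NO_FLAGS),
        Insn("jz  target", NO_FLAGS, frozenset({"ZF"})),
    ]
    for insn, flags in zip(block, needed_flags(block, live_out=NO_FLAGS)):
        print(f"{insn.text:14} -> {sorted(flags)}")
```

In this example the `sub` needs no flags at all, because the `cmp` overwrites every one of them before anything reads them, and if nothing after the block reads flags, the `cmp` only needs ZF. Neither instruction has to pay for PF or AF.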

This fascinated me. While few modern applications likely read the AF and PF bits, Apple, who make their own CPUs and can control the entire process, put in place a hardware step to increase performance when running translated x64 code on their own processors. I love it. Apple’s move here demonstrates the benefits of their position in controlling all aspects of their systems. A generic Snapdragon ARM SoC, say, would deliver notably less performance in this specific scenario, and getting non-native apps running solidly and fast during this transition period is critically important to Mac users.

I relish such minor details as this in the engineering of next generation hardware and wanted to share them with readers who might find a similar appreciation. It puts me in mind of some of the very use-case-specific innovations needed by Microsoft — but not Sony (and vice versa) — in the fascinating tale of the joint creation of the common PowerPC core used in the Sony PS3 and the Xbox 360 as told in Shippy & Phipps’ excellent book, The Race for a New Game Machine: Creating the Chips Inside the XBox 360 and the Playstation 3.

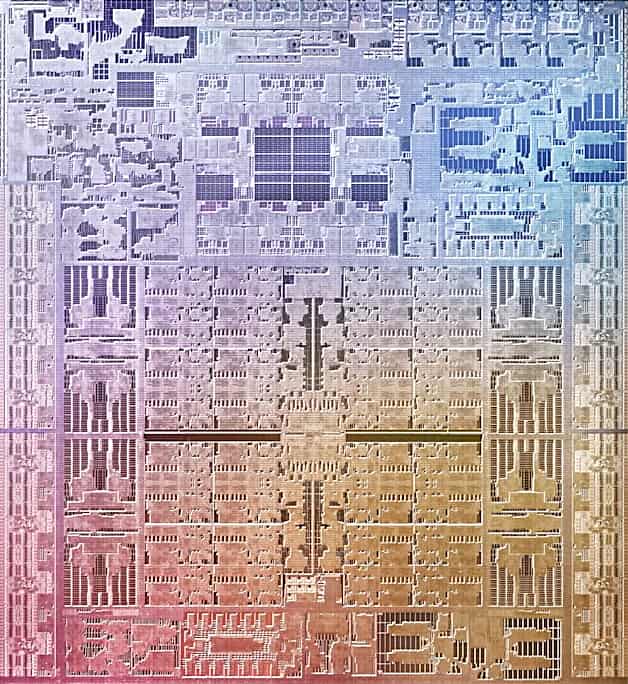

Dougall Johnson, who provided this insight into the M1 and family, is driving an effort to reverse engineer and document the Apple G13 GPU architecture (used by the M1) as well as to document the Apple Firestorm/Icestorm CPU microarchitecture. His work towards these ends can be found on GitHub at these respective links: Apple GPU, Apple CPU.

Performant is not a word.

https://dictionary.cambridge.org/us/dictionary/english/performant It sure seems like Cambridge disagrees with you.

Was that as embarrassing as it looked?

How is it possible to be such an idiot.

“Performant” is a secret language extension to accommodate an English Artifact. :-)

You got the Nazi part right


Just another example of the advantage Apple is able to wield by owning the whole stack. The M platform has a 3+ year lead on all other CPU manufacturers and it is quite astounding how Apple shunted away from Intel’s incompetence and implemented an unrivaled CPU solution.

